Blog Article

Surge Pricing on Stellar: FAQ

Author

Tomer Weller

Publishing date

Surge pricing

Fees

Please note: this blog post was written in 2019. A lot has changed since then. Crucially, CAP-5, which implemented the fee-related changes described below, is now live on the public network, and has been for over a year. Stellar fees are dynamic, and the ledger limit, which is measured in operations/ledger rather than transactions/ledger as this article describes, is currently set to 1,000 operations per ledger. For current info on surge pricing, please see the fees glossary entry in the developer docs.

Over the past few days, a lot of Stellar users have had trouble submitting transactions due to an increase in network activity. Ledgers that are full to the brim, often with failed transactions, have become increasingly common.

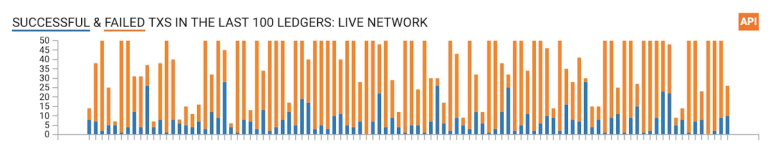

Here’s a snapshot of the last 200 ledgers as of writing these lines, from the Stellar dashboard. As you can see, about a quarter of the ledgers are maxed out at 50 transactions:

The Stellar protocol was built to handle network congestion and ensure that legitimate transactions make it to the network quickly. The mechanism responsible for that is called Surge Pricing and it has been working flawlessly. We realize, however, that Surge Pricing isn’t very well documented at the moment, so we put together this FAQ to explain what it is and how it works, and to let you know what you can do to adjust your network fees to take advantage of it.

Is Stellar under attack?

No! Stellar is being used and is working as expected.

So what are all these new transactions on the network?

Besides an increase in regular operations, like payments and orderbook activity, we’ve also been seeing a lot of arbitrage attempts through path payments. These path payments try to take advantage of imbalances between the various order books. For example, if I trade some XLM for USD, then to EURT and back to XLM, I might end up having more XLM than I started with. This can happen because of imbalances between the three markets (XLM/EURT, EURT/USD and XLM/USD). Arbitrage is a good thing! It ensures that markets are balanced and reflect real world supply and demand.

OK. So how many transactions per second does Stellar actually support?

Stellar is unique in that it allows multiple operations, up to 100, to be included in a single transaction. For example, I can send 100 individual payments by submitting a single transaction. The public Stellar network is currently configured with a limit of 50 transactions per ledger, so with operation batching a single ledger can contain up to 5,000 operations. Ledgers close on average every 5 seconds, so that’s a limit of 10 transactions, or 1,000 operations, per second.
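The arithmetic behind those numbers is easy to check. Here is a minimal sketch in Python; the constant and function names are made up for illustration, and the values are simply the figures quoted in this post.

```python
# Figures quoted in this post (public network configuration at the time of writing).
MAX_TX_PER_LEDGER = 50
MAX_OPS_PER_TX = 100
AVG_LEDGER_CLOSE_SECONDS = 5


def throughput(max_tx_per_ledger, max_ops_per_tx, close_seconds):
    """Return (operations per ledger, transactions per second, operations per second)."""
    ops_per_ledger = max_tx_per_ledger * max_ops_per_tx
    tx_per_second = max_tx_per_ledger / close_seconds
    ops_per_second = ops_per_ledger / close_seconds
    return ops_per_ledger, tx_per_second, ops_per_second


ops_per_ledger, tx_per_second, ops_per_second = throughput(
    MAX_TX_PER_LEDGER, MAX_OPS_PER_TX, AVG_LEDGER_CLOSE_SECONDS
)
print(ops_per_ledger, tx_per_second, ops_per_second)
```

Note that the per-second operation figure is only reached if every transaction in a full ledger carries the maximum of 100 operations.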

And what happens when more than 50 transactions are submitted for a ledger?

The network enters Surge Pricing mode, which lets market dynamics for fees decide which transactions should be included in a ledger. In this mode, the 50 transactions that offer the highest fee per operation make it into the ledger. If more than 50 transactions qualify, for example because 51 transactions offer the same fee per operation, they are (pseudo-randomly) shuffled and the first 50 are taken. The rest of the transactions, the ones that didn’t make the cut, are pushed to the next ledger, or discarded if they’ve been waiting for too long.
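To make the selection rule concrete, here is a rough Python sketch of the idea described above. It is an illustration only, not the stellar-core implementation: the tuple layout and the `select_for_ledger` name are assumptions made for this example, and it ignores details such as how long a transaction has been waiting.

```python
import random
from fractions import Fraction

LEDGER_TX_LIMIT = 50  # transactions per ledger, as quoted in this post


def select_for_ledger(candidates, limit=LEDGER_TX_LIMIT, rng=None):
    """Pick the transactions with the highest fee per operation.

    candidates: list of (tx_id, total_fee_in_stroops, number_of_operations).
    Returns (included, left_over).
    """
    rng = rng if rng is not None else random.Random()
    pool = list(candidates)
    # Shuffle first so that transactions offering the same fee per
    # operation end up in a pseudo-random order relative to each other.
    rng.shuffle(pool)
    # sorted() is stable, so ties keep their shuffled order.
    ranked = sorted(pool, key=lambda tx: Fraction(tx[1], tx[2]), reverse=True)
    return ranked[:limit], ranked[limit:]


# 51 single-operation transactions at the minimum fee, plus one
# 100-operation batch that offers 200 stroops per operation.
candidates = [(f"tx{i}", 100, 1) for i in range(51)]
candidates.append(("batch", 20_000, 100))

included, left_over = select_for_ledger(candidates, rng=random.Random(7))
print(len(included), len(left_over))  # 50 2
print(included[0][0])                 # batch
```

The batch transaction ranks first because it offers more per operation, even though it takes up just one of the 50 slots. Two of the minimum-fee transactions are left over, and which two depends on the shuffle.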

But that means that a small transaction, containing one operation, counts the same as a big transaction with 100 operations?

Yes, but that’s going to change. CAP-5, titled “Throttling and transaction pricing improvements”, which has already been accepted, will change the transactions-per-ledger limit to an operations-per-ledger limit. This will make full ledgers more consistent in size and give validators better control over setting limits.

And why 50 transactions per ledger, can’t we just increase that limit?

The transactions per ledger limit (or in the future operations per ledger limit) is decided in a distributed manner by the validators in the network through SCP. If a sufficient number of validators decide to change that value, it can be changed. This configuration value needs to balance between several core principles of the network: performance, decentralization and inclusiveness. It should be high enough so that the network can support an increasing volume of activity but low enough so that nodes across the world with access to lower end hardware and slower connectivity can still keep up (for watcher nodes supporting systems like Horizon) and participate in consensus (for validators, key for decentralization).

Do failed transactions count towards that limit?

It depends. There are two steps during which transactions can fail: validation and consensus. If a transaction fails during validation, for example if it doesn’t have sufficient signatures, it will not be submitted to consensus and doesn’t count towards the transactions per ledger limit. If a transaction is submitted for consensus, it will count towards that limit and have its fees taken even if it fails. For example, a payment transaction that is well formed and properly signed can still fail during consensus if I don’t have a sufficient balance.

In fact, most of the arbitrage path payment operations that we’re seeing these days are failing during consensus, and that’s why ledgers are full.

Fees? You said Stellar is free!

Not exactly. We said it’s cheap :) The minimum operation fee is another parameter that can be configured by consensus. It is currently 100 stroops (a stroop is the smallest supported unit of XLM: 0.0000001 XLM, to be exact), which is about 0.00008 US cents. That’s cheap.
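If you want to check the conversion yourself, here is a small Python sketch. The XLM price of $0.08 is an assumption: it is the price implied by the figures above, not an official quote, and the function names are made up for this example.

```python
from decimal import Decimal

STROOPS_PER_XLM = Decimal(10_000_000)  # 1 stroop = 0.0000001 XLM


def stroops_to_xlm(stroops):
    """Convert an amount in stroops to XLM."""
    return Decimal(stroops) / STROOPS_PER_XLM


def fee_in_us_cents(stroops, xlm_price_usd):
    """Convert a fee in stroops to US cents at a given XLM price in USD."""
    return stroops_to_xlm(stroops) * Decimal(str(xlm_price_usd)) * 100


min_fee_xlm = stroops_to_xlm(100)
# Assumed price of $0.08 per XLM, implied by the figures in this post.
min_fee_cents = fee_in_us_cents(100, "0.08")
print(min_fee_xlm, min_fee_cents)
```

`Decimal` is used instead of floats so that tiny amounts like these are not distorted by rounding.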

Actually, it’s too cheap. It allows people to write scripts that submit as many transactions as the network can handle, even when they’re likely to fail. Which is exactly what the arbitrage bots are doing.

However, the above is just the minimum operation fee. You can offer as much as you want to ensure that your transaction makes it to the network.

But my Stellar wallet doesn’t even let me choose my fee.

Historically, surge pricing was a rare event on the network so wallets have always submitted the minimum operation fee. Going forward, wallet developers will need to form their own fee strategy. They can choose to observe the current fee stats of the network (which we are in the process of improving) and make an informed decision, or alternatively, consult with the user. The common user will probably not care if they’re paying 0.0008 cents or 0.00008 cents (did you even notice the extra 0?) so we encourage wallets to be mindful of the user experience.
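As an illustration of what a simple fee strategy could look like, here is a hedged Python sketch. Everything in it is an assumption made for the example: the inputs (a list of recently accepted per-operation fees and a ledger capacity usage ratio), the thresholds, and the function name. It is not an SDK feature or a recommended policy.

```python
MIN_FEE_STROOPS = 100  # minimum operation fee quoted in this post


def choose_fee_per_op(recent_accepted_fees, ledger_capacity_usage,
                      max_fee=10_000, percentile=90, busy_threshold=0.5):
    """Pick a per-operation fee bid in stroops.

    recent_accepted_fees: per-operation fees (stroops) that recently made it
        into ledgers (hypothetical input, e.g. gathered from fee stats).
    ledger_capacity_usage: fraction of the ledger limit in use, 0.0 to 1.0.
    max_fee: the most the wallet (or its user) is willing to bid.
    """
    # Quiet network or no data: the minimum fee is enough.
    if ledger_capacity_usage < busy_threshold or not recent_accepted_fees:
        return MIN_FEE_STROOPS
    ordered = sorted(recent_accepted_fees)
    # Nearest-rank percentile, using integer arithmetic to avoid float rounding.
    rank = max(1, (percentile * len(ordered) + 99) // 100)
    bid = ordered[rank - 1]
    # Never go below the network minimum or above the wallet's ceiling.
    return max(MIN_FEE_STROOPS, min(bid, max_fee))


print(choose_fee_per_op([100, 100, 200, 1000], 0.2))    # 100
print(choose_fee_per_op([100] * 8 + [500, 1000], 1.0))  # 500
```

The ceiling matters: without `max_fee`, a wallet that blindly follows recent fees could be dragged into bidding far more than its user would ever accept.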

As for bot developers who submit a large volume of transactions, they will have to take fee economics into consideration and weigh them in their strategy.

Will I pay the extra fees even if the network is not in Surge Pricing?

Yes. With the current version of the protocol you will always pay the fee per operation times the number of operations in your transaction.

This will change in the future. CAP-5 introduces “Dutch auction” fee payments, which ensure that the operation fee you submit is the maximum you will pay and the actual amount is the lowest possible.
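The difference between the two models can be sketched in a few lines of Python. This is a simplified reading of the description above, not the CAP text: `clearing_fee_per_op` is a hypothetical name for the lowest per-operation fee the ledger actually required, and the function names are made up for this example.

```python
def fee_charged_current(bid_per_op, num_ops):
    """Current protocol: you always pay your full bid for every operation."""
    return bid_per_op * num_ops


def fee_charged_auction(bid_per_op, num_ops, clearing_fee_per_op):
    """Auction-style sketch: the bid is a ceiling, not the price.

    clearing_fee_per_op is the (hypothetical) lowest per-operation fee that
    was needed to get into the ledger. A transaction bidding below it would
    simply not be included, so that case is not modelled here.
    """
    return min(bid_per_op, clearing_fee_per_op) * num_ops


# A 3-operation transaction bidding 1,000 stroops per operation
# while the ledger only required the 100-stroop minimum.
print(fee_charged_current(1000, 3))       # 3000
print(fee_charged_auction(1000, 3, 100))  # 300
```

Under the auction-style model, bidding high to be safe during a surge no longer means overpaying when the network is quiet.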

Will the minimum operation fee ever go up?

Probably yes. This is up to the validators, but the general sentiment is that the minimum needs to be higher. However, there are many presigned transactions out there with the current minimum, which would be invalidated, so the change should be made with this in mind.

What is the SDF doing to mitigate this situation?

We’re always hard at work scaling the Stellar network in preparation for increased transaction volume.

The core engineering team is continuously improving the performance of stellar-core. The 10.1.0 release included a reworked database access layer, which improved transaction processing rates and laid groundwork for more aggressive performance work in subsequent revisions. The upcoming 10.3.0 release will build on that by allowing database operation batching, which shows performance improvements across the board.

The Platform engineering team is adding failed transaction ingestion to Horizon so that failed arbitrage transactions can be properly accounted for. The next version of Horizon will expose detailed fee statistics (UPDATE 02/26/2019: Horizon 0.17.0 was released with fee stats, which are now reflected in the Dashboard), allowing wallets to use dynamic fee strategies and greatly cut down on hung transactions. These fee strategies will be included in the next versions of all SDF-maintained SDKs: Go, Java, and JavaScript.

The partnership team is letting people know what’s going on, and working closely with wallets and exchanges to make sure they have strategies in place to structure their fees. If you have any questions about how Surge Pricing might affect the app or business you built on Stellar, reach out to them, or ask on the stellar.public Keybase, which is the best place to interact with Stellar developers.